
Over the next two months, I’m going to share an in-depth playbook for launching and growing an AI-native business.

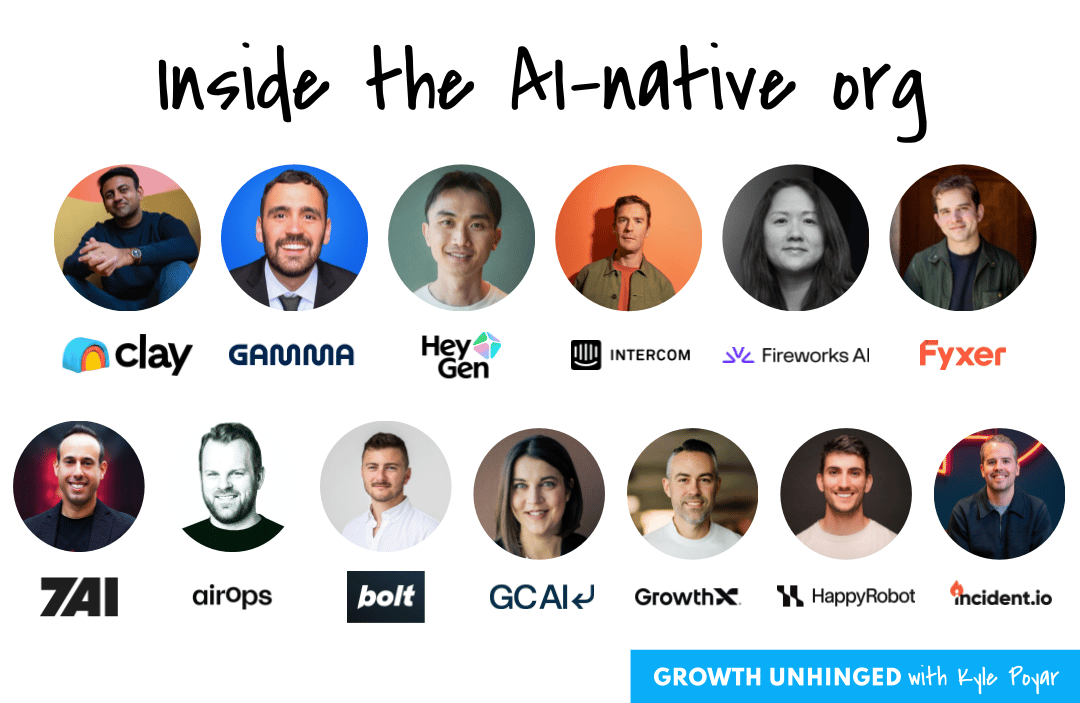

This series is the result of more than a dozen 1:1 interviews with founders from breakout AI-native companies. Some have already scaled to $100M ARR and beyond – including Clay, Gamma, HeyGen, Intercom (Fin.ai), and Fireworks AI. Others are fast-growing AI-native startups that are well positioned to join them, including 7AI, AirOps, bolt.new, Fyxer, GC AI, GrowthX, HappyRobot, and incident.io.

An overview of the series:

Part 1: A guide for reaching $1 million ARR (TODAY)

The conversations woke me up to just how different AI-native businesses are from B2B software, and to what hasn’t changed. Here’s a preview of some of the more interesting takeaways:

PMF isn’t gradual in AI.

AI-native companies reach $1 million ARR twice as fast as B2B SaaS.

Price sensitivity drops dramatically when AI does real work.

Product success hinges on AI performance crossing a reliability threshold.

Explicit user feedback (thumbs up/down) can be surprisingly insightful on its own.

AI companies tend to delay hiring their first AE until about $2-5M ARR.

The winning GTM motions combine bottom-up discovery with top-down sales.

The best GTM teams look more like consulting firms than SaaS sellers.

A huge thank you to: Alexander Berger (COO of bolt.new), Archie Hollingsworth (co-founder of Fyxer), Cecilia Ziniti (co-founder of GC AI), Des Traynor (co-founder of Intercom, Fin.ai), Jon Noronha (co-founder of Gamma), Joshua Xu (co-founder of HeyGen), Lin Qiao (co-founder of Fireworks AI), Lior Div (co-founder of 7AI), Marcel Santilli (founder of GrowthX), Matt Hammel (co-founder of AirOps), Pablo Palafox (co-founder of HappyRobot), Stephen Whitworth (co-founder of incident.io), and Varun Anand (co-founder of Clay).

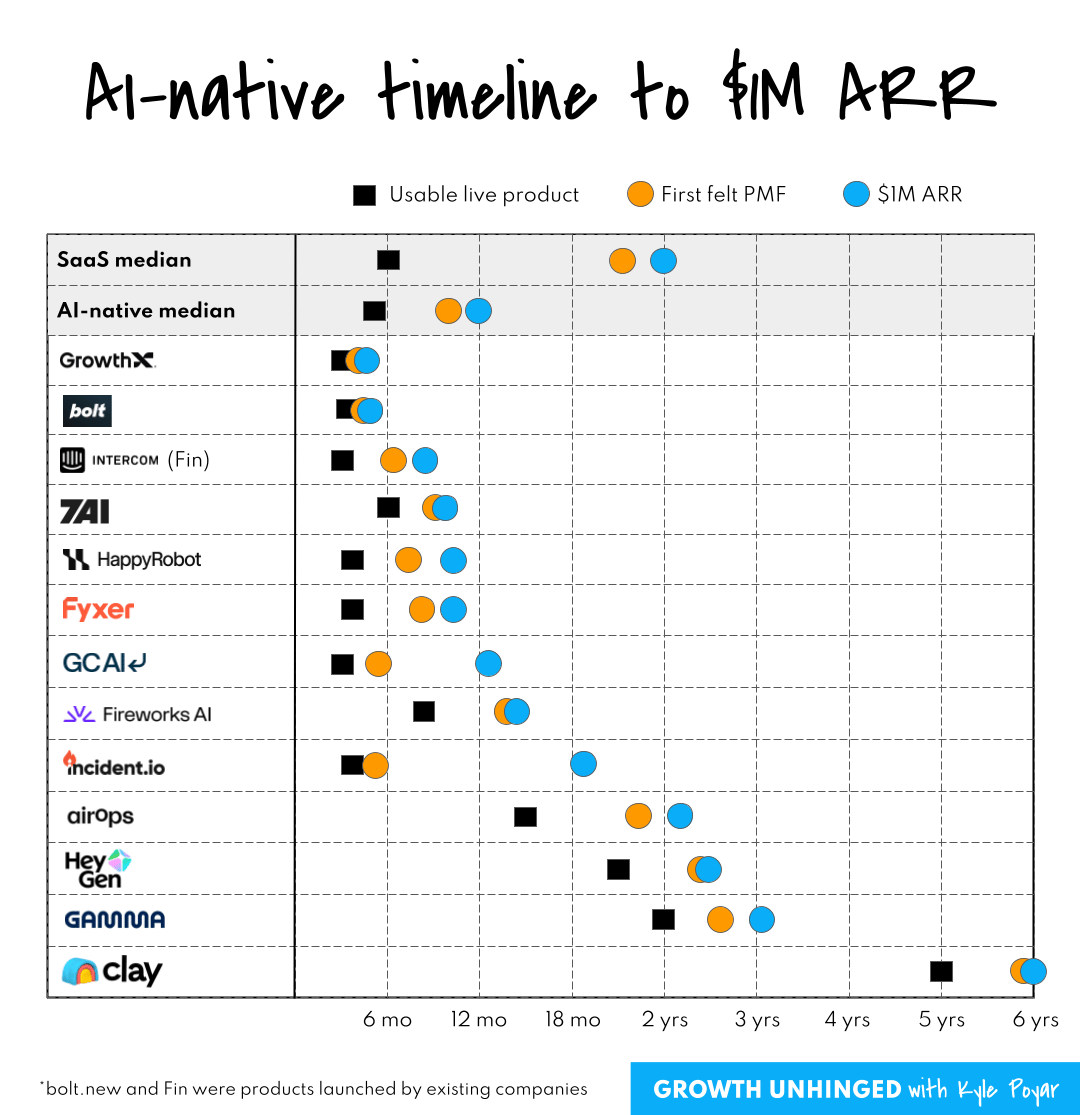

Typical times to get to $1M ARR

Lenny Rachitsky found that it took successful SaaS companies roughly two years to feel product-market fit (PMF) and reach their first $1 million in ARR. Some notable exceptions – including Figma, Airtable, and Slack – took four or more years to feel PMF.

Much of this time was spent gradually iterating on the product with alpha and beta testers. Finding PMF was an artisanal endeavor: careful, steady, and incremental. In fact, it was so gradual that many SaaS founders never fully felt they had achieved PMF.

The biggest change for AI-native companies is the speed. Everything has compressed. Top AI-native startups hit the same milestones in roughly half the time it took the best SaaS companies.

The median AI-native startup grew to $1 million ARR just 12 months after it started building. bolt.new exploded to $4M ARR in only four weeks post-launch. GrowthX hit $1M ARR only three months after the company was founded. The market was ready to buy, even if the product was still a work in progress.

“The core principles of building a legendary company haven’t changed. But it’s all way faster now. Feedback loops are faster. People can build software more quickly so there’s more competition. You need to execute faster.”

What PMF feels like for AI-native products

One surprise from my interviews was how binary PMF tends to be for AI-native products.

PMF was often so obvious that it was inarguable. Founders either felt extreme market pull or they didn’t. The harder part was keeping up with demand and productizing as much of the experience as possible.

“We first felt PMF on day one. Immediately it was obviously the best product we ever launched. Everything else we built would have a day one pop, then drop off. This just kept accelerating.”

“In 2024 we had a new user channel in Slack. We had to turn it off because there were too many new users. We had high confidence that if the quality was there, people would pay for Fyxer. It was a technical question of whether the quality was there.”

“We had $2 million in revenue and hadn’t even announced the company yet. Growth came from workshops where we would teach people how to compete against us with a DIY approach. I was doing all the sales myself, closing $150k contracts with a 60% win rate with a sales cycle of less than 30 days… We had insane PMF because we already had a service.”

“We initially had a 23-24% resolution rate and we looked at the times when Fin didn’t reach a resolution. We saw a time when Fin went back and forth with a customer seven times. We saw another customer batch together five questions at once; Fin answered all of them correctly. Even when Fin didn’t resolve a ticket, it saved a support rep an hour. We knew the thing was valuable.”

A clear PMF tell: customers are ready to buy and they aren’t particularly price sensitive.

GC AI charges more than 20x the cost of a Claude or ChatGPT subscription. Still, people purchase it quickly and without a complex sales motion, because they’re hooked within the first five prompts, once they feel the UI and see the better legal answers.

“At one point 23% of people who took our classes [GC AI teaches classes on its product] paid for the product. There’s never been a webinar in the world where 23% of people took the next step, let alone bought. Our customers (lawyers) are smart and can tell right away when the product is worth paying more for.”

Gamma A/B tested pricing and found that people were willing to pay double the price of a conventional productivity app. The highest price they tested ($20 per month) performed best.

“Willingness to pay looks different for AI products. It used to be really hard to get someone to pay $10 per month for a productivity app. It’s much easier to get someone to pay $20 for AI.”

It’s worth mentioning that some companies, including HeyGen, Gamma, and Clay, still iterated for two or more years before they felt PMF. Clay even took six years.

These outliers were usually founded pre-ChatGPT and tried out multiple product concepts. Prior launches quickly fizzled out. Then they struck gold, and things were obviously different.

“We relied on the signal of how much market pull there was from customers. When you have PMF, you just feel it. It’s different.”

“Our previous launches weren’t self-sustaining in terms of signups. Then we saw growth without any marketing. We started spending the whole weekend responding to support tickets, and a lot of the tickets were from people asking how to pay us.”

How to tell whether your AI product works

Product-market fit was usually fairly obvious. The harder problem was figuring out whether the AI product worked consistently and whether it was substantially better than alternatives like Claude or ChatGPT. That would be the key to durable revenue and avoiding the ‘thin wrapper’ problem of AI apps.

HeyGen has a dedicated AI success team responsible for internal evals, data for the evals, and evaluating the HeyGen videos that users share externally.

“If the AI quality is there, people will keep using it. This is the most important metric and a leading indicator for us. But AI quality is very hard to measure and it’s subjective.”

HappyRobot builds tools that let customers evaluate their own AI agents in a way that’s tailored to each agent’s work. Customers create behavioral North Stars that define correct agent behavior in production. Agents aren’t only doing the work; they’re also QA-ing the work that gets done.

Founders measured AI performance in four ways: explicit user signals, implicit user signals, adoption signals, and business impact signals.
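
For teams that want to track these four categories side by side, here is a minimal sketch in Python. It is an illustration, not any interviewed company’s method. The field names, the `scorecard` function, and the choice of one metric per category are all assumptions: thumbs-up rate stands in for explicit signals, the share of outputs kept without edits for implicit signals, the share of users who tried the AI for adoption, and resolution rate for business impact. The sample data is made up.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    """One AI response shown to a user. All field names are hypothetical."""
    user_id: str
    thumbs: Optional[int]     # explicit signal: +1, -1, or None if unrated
    accepted_unedited: bool   # implicit signal: user kept the output as-is
    resolved: bool            # business impact: task finished without a human


def rate(hits: int, total: int) -> float:
    """Return hits / total, or 0.0 when there is nothing to count."""
    return hits / total if total else 0.0


def scorecard(interactions: list, total_users: int) -> dict:
    """Compute one illustrative metric per signal category."""
    rated = [i for i in interactions if i.thumbs is not None]
    n = len(interactions)
    return {
        # Explicit: share of rated responses that got a thumbs up.
        "explicit_thumbs_up_rate": rate(sum(i.thumbs == 1 for i in rated), len(rated)),
        # Implicit: share of responses the user kept without editing.
        "implicit_accept_rate": rate(sum(i.accepted_unedited for i in interactions), n),
        # Adoption: share of all users who used the AI at least once.
        "adoption_rate": rate(len({i.user_id for i in interactions}), total_users),
        # Business impact: share of interactions resolved by the AI alone.
        "business_resolution_rate": rate(sum(i.resolved for i in interactions), n),
    }


# Made-up sample data, for illustration only.
sample = [
    Interaction("a", 1, True, True),
    Interaction("a", -1, False, False),
    Interaction("b", None, True, False),
    Interaction("c", 1, False, True),
]
card = scorecard(sample, total_users=6)
for name, value in card.items():
    print(f"{name}: {value:.0%}")
```

Keeping the four numbers in one scorecard makes it easier to spot disagreement between them, such as a high thumbs-up rate alongside a low resolution rate.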