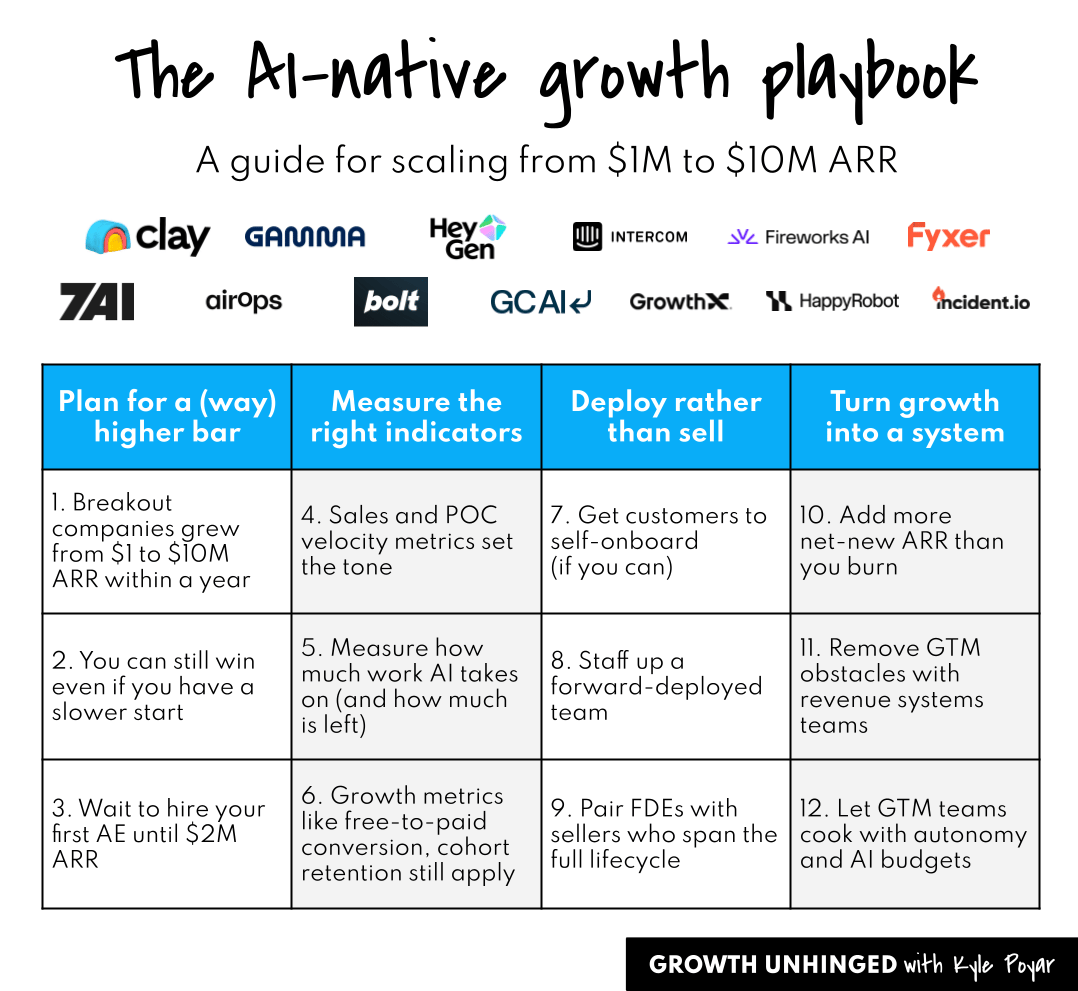

Welcome to part two of my playbook for building an AI-native company. Here’s an overview of the series:

Part 2: A guide for scaling to $10 million ARR and beyond (TODAY)

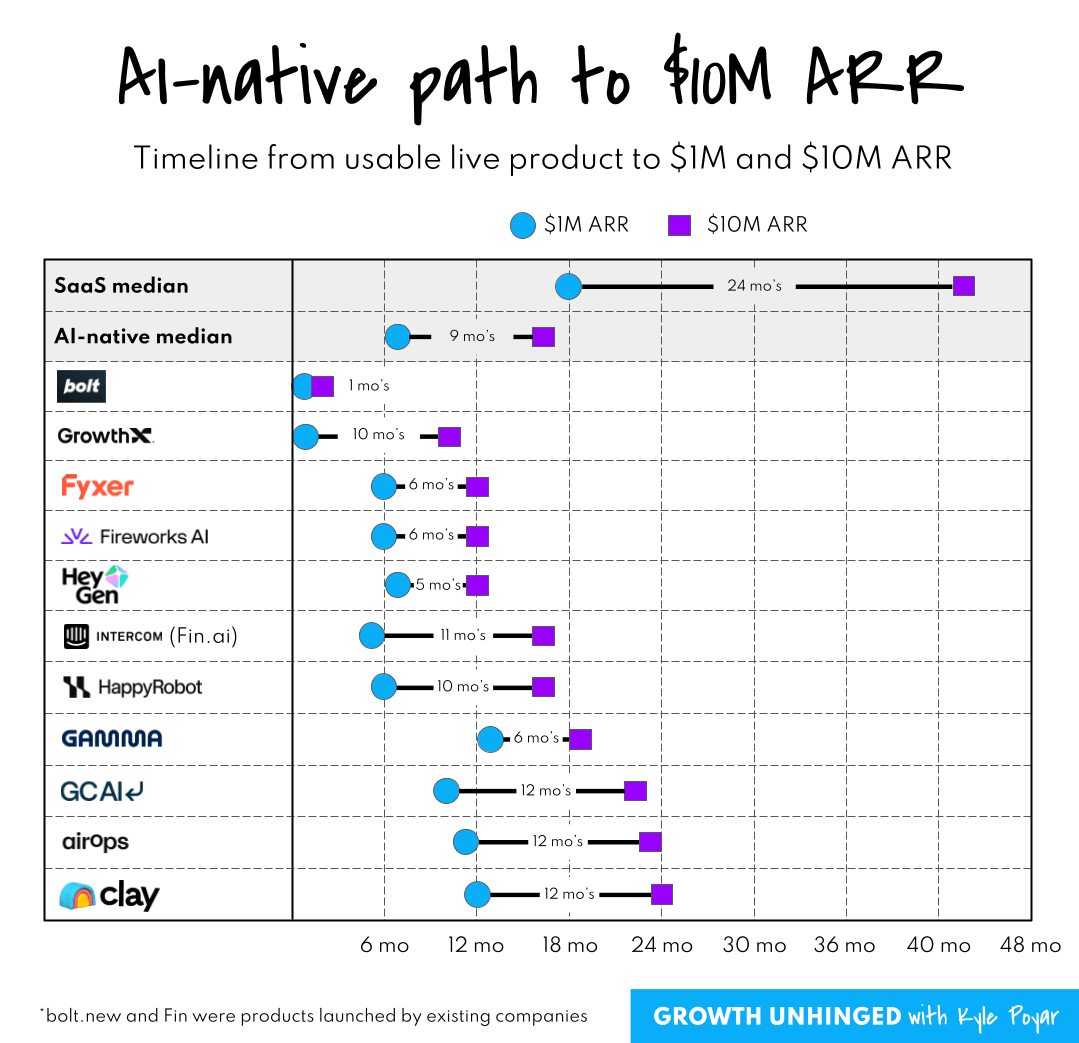

In part one, we covered how product-market fit (PMF) was often binary for AI-native companies. Founders either felt extreme market pull or they didn’t. AI-native companies grew to $1 million ARR only six months after they had a usable live product. The harder part was keeping up with demand and productizing as much as possible.

What happens after the first $1M ARR is even more interesting. It used to take IPO-caliber SaaS companies more than 24 months to go from $1M to $10M ARR. AI companies like Clay, Gamma, HeyGen, and Fyxer are doing it in 12 months or less.

This playbook shows you how. It’s backed by more than a dozen 1:1 interviews with founders from breakout AI-native companies.

A huge thank you to: Alexander Berger (COO of bolt.new), Archie Hollingsworth (co-founder of Fyxer), Cecilia Ziniti (co-founder of GC AI), Des Traynor (co-founder of Intercom, Fin.ai), Jon Noronha (co-founder of Gamma), Joshua Xu (co-founder of HeyGen), Lin Qiao (co-founder of Fireworks AI), Lior Div (co-founder of 7AI), Marcel Santilli (Founder of GrowthX), Matt Hammel (co-founder of AirOps), Pablo Palafox (co-founder of HappyRobot), Stephen Whitworth (co-founder of incident.io) and Varun Anand (co-founder of Clay).

Plan for a (way) higher bar

Breakout companies grew from $1M to $10M ARR within a year

Breakout SaaS companies aspired to the T2D3 path. After reaching $1M ARR, they’d hope to hit $3M ARR in 12 months and then $9M ARR in 24 months.

The typical AI-native company I interviewed grew from $1M to $10M ARR in just 9 months! Nearly all did it in under 12 months. When you factor in the time to build a usable product, AI companies hit $10M ARR before SaaS companies were even at their first $1M.
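To make the gap concrete, both trajectories can be converted into an implied compound monthly growth rate. This is a back-of-the-envelope sketch that assumes smooth month-over-month compounding, which real revenue curves rarely follow. The function name is illustrative.

```python
def implied_monthly_growth(start_arr: float, end_arr: float, months: int) -> float:
    """Constant month-over-month growth rate that takes start_arr to end_arr."""
    if start_arr <= 0 or end_arr <= 0 or months <= 0:
        raise ValueError("ARR values and months must be positive")
    return (end_arr / start_arr) ** (1 / months) - 1


# First leg of T2D3: $1M to $3M ARR in 12 months.
saas_rate = implied_monthly_growth(1_000_000, 3_000_000, 12)

# Typical AI-native company from the interviews: $1M to $10M ARR in 9 months.
ai_rate = implied_monthly_growth(1_000_000, 10_000_000, 9)

print(f"SaaS (T2D3, year one): {saas_rate:.1%} per month")
print(f"AI-native:             {ai_rate:.1%} per month")
```

Under that simplifying assumption, the T2D3 pace works out to a bit under 10% growth per month, while the AI-native pace is roughly 29% per month, or about three times the monthly rate.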

In some cases, every aspect of company building was accelerated. HappyRobot started building in January 2024. They crossed $10M ARR in 2025, 10 months after reaching $1M ARR. GrowthX started building in October 2024 and announced the company in December 2024. They reached $12M ARR a year later.

You can still win even if you have a slower start

At Clay, Gamma, and HeyGen, it took two or more years to launch a usable live product. StackBlitz had been around for seven years before focusing on its vibecoding app, Bolt. Intercom was founded in 2011 and spent its first 12 years as a SaaS application; everything changed after it launched its AI agent, Fin.

The slower start didn’t matter. Once these companies had PMF for an AI-native app, the business exploded. They went from $1M to $10M ARR in 12 months (Clay), 6 months (Gamma), 5 months (HeyGen), and <2 months (Bolt).

Wait to hire your first AE until $2M ARR

The AI-native companies I spoke with usually waited to hire their first account executive (AE) until they were between $2M and $5M ARR. Before hiring, they set up a repeatable GTM motion through either self-serve or founder-led sales. (In my experience, SaaS companies hired their first AEs as they approached $1M ARR.)

A big benefit of waiting: AI companies didn’t blindly follow the old SaaS sales playbooks. They discovered GTM motions that were consultative, more technical than commercial, blended end-user excitement with top-down AI mandates, and moved fast from first conversation to enterprise deal.

Measure the right leading indicators

Sales and proof of concept (POC) velocity metrics set the tone

If you reverse engineer going from $1M to $10M ARR in 12 months, everything in GTM needs to move faster. Hiring sellers, ramping them, generating pipeline, setting up POCs, and navigating enterprise procurement all need to be done in weeks or even days.

Some, like GC AI, just didn’t let prior experience slow them down.

“We didn’t know you’re supposed to be slow or that enterprise deals should take six months. And we were selling to legal teams who are not used to buying AI yet. But we just moved fast. The naivete and first principles approach have been huge accelerants.”

Others, like 7AI, found ways to balance speed with trust. 7AI sells an agentic security solution to CISOs at large enterprises. This is definitionally not a category that acts quickly, and trust is critical in the buying process. Many of 7AI’s prospects want a proof of concept, so 7AI closely monitors POC velocity along with the time to close a full production deployment.

“We’re looking at how quickly we’re able to show value and then move to an initial land. Once we show value and cover a customer’s use cases, we’re able to close quickly. With DXC, which has 120,000 employees, we went from initial conversation to full production deployment in 8 weeks.”

As their market gets more comfortable with AI in security operations, 7AI pays close attention to the percentage of opportunities that don’t require a POC. This shows how the product category is maturing and whether they can further accelerate deal velocity.
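Here is a minimal sketch of how a team might track these velocity metrics from its own deal records. The `Opportunity` fields, function names, and sample dates are all hypothetical and are not taken from 7AI's actual reporting.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class Opportunity:
    first_conversation: date
    production_deployment: date
    poc_start: Optional[date] = None  # None means the buyer skipped the POC
    poc_end: Optional[date] = None


def poc_free_share(opps: list[Opportunity]) -> float:
    """Share of closed opportunities that did not require a POC."""
    return sum(o.poc_start is None for o in opps) / len(opps)


def median_poc_days(opps: list[Opportunity]) -> Optional[float]:
    """Median POC length in days, ignoring deals without a POC."""
    durations = [
        (o.poc_end - o.poc_start).days
        for o in opps
        if o.poc_start is not None and o.poc_end is not None
    ]
    return median(durations) if durations else None


def median_days_to_production(opps: list[Opportunity]) -> float:
    """Median days from first conversation to full production deployment."""
    return median([(o.production_deployment - o.first_conversation).days for o in opps])


# Hypothetical closed deals, for illustration only.
opps = [
    Opportunity(date(2025, 1, 6), date(2025, 3, 3), date(2025, 1, 13), date(2025, 2, 3)),
    Opportunity(date(2025, 2, 3), date(2025, 3, 17), date(2025, 2, 10), date(2025, 2, 24)),
    Opportunity(date(2025, 3, 3), date(2025, 3, 31)),
    Opportunity(date(2025, 4, 7), date(2025, 4, 28)),
]

print(f"Deals without a POC: {poc_free_share(opps):.0%}")
print(f"Median POC length: {median_poc_days(opps)} days")
print(f"Median time to production: {median_days_to_production(opps)} days")
```

Tracking the POC-free share over successive quarters, rather than as a single snapshot, is what would show whether the category is maturing.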

Measure how much work AI takes on (and how much is left)

Fin, the AI agent for customer service, charges $0.99 per outcome. They only make money when the product works. Resolution rate is Fin’s first-order KPI and it’s measured both internally and with customers. (A resolution happens when, for example, the customer confirms that Fin resolved the issue.)

Resolution rate sounds fairly straightforward. It’s not, and it’s only part of the equation for buyers. The customer might have additional needs even if the first issue is resolved. Latency or lag time could hurt the customer experience. AI answers could consume more or fewer tokens. AI could hallucinate. And then there’s the matter of how the AI answers look. I’d venture to guess that the easiest way to game resolution rates would be to give the AI more latitude to “guess” an answer (aka hallucinate).

Co-founder Des Traynor told me Fin pairs resolution rates with customer satisfaction (CSAT), which indicates whether the answers are great and Fin is keeping customers happy. They’ve now introduced their own vertical model called Fin Apex, which shows fewer hallucinations, less latency, and higher resolution rates compared to general-purpose LLMs.

Des monitors Fin’s total automation rate as well. This measures how much work AI completes relative to the total addressable work in an account. You can think of it as an AI-native share of wallet metric.

“Customers might not expose Fin to certain topics, cases, or support channels like voice or WhatsApp. We measure how much of the support volume we’re getting and what percentage of that work we’re resolving. This shows the total amount of work done for the customer.”
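One way to read that description is as the product of two ratios: how much of the account's support volume the agent is exposed to, and how much of that exposed volume it resolves. The sketch below works through the arithmetic. The function names, the term "coverage," and the sample numbers are my own assumptions, not Fin's published definitions.

```python
def coverage(handled: int, total_volume: int) -> float:
    """Share of the account's total support volume routed to the AI agent."""
    return handled / total_volume if total_volume else 0.0


def resolution_rate(resolved: int, handled: int) -> float:
    """Share of the conversations the agent handled that it resolved."""
    return resolved / handled if handled else 0.0


def total_automation_rate(resolved: int, handled: int, total_volume: int) -> float:
    """Work the agent resolved relative to all addressable work in the account."""
    return coverage(handled, total_volume) * resolution_rate(resolved, handled)


# Hypothetical account: 10,000 conversations a month across all channels.
# The agent is exposed to 6,000 of them and resolves 3,900.
total_volume, handled, resolved = 10_000, 6_000, 3_900

print(f"Coverage:              {coverage(handled, total_volume):.0%}")
print(f"Resolution rate:       {resolution_rate(resolved, handled):.0%}")
print(f"Total automation rate: {total_automation_rate(resolved, handled, total_volume):.0%}")
```

In this made-up account, a 65% resolution rate looks strong on its own, but the agent only does 39% of the total work because 40% of the volume never reaches it. That remaining share is the "how much is left" half of the metric.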

Growth metrics like free-to-paid conversion still apply

AI-native companies still care about classic growth metrics. The most frequently mentioned: paid customer retention on a cohort basis, free-to-paid conversion, product adoption, usage frequency, demo requests, and direct traffic to the website.

“We care a lot about the frequency of use, specifically the number of days editing per month. It’s a strong leading indicator of retention.”

“The free-to-paid conversion ratio gives us a sense of whether people are getting enough of an aha moment. New users get anywhere from one to five messages before they hit a paywall. Are they able to get to the point where this solves enough of their problem to enter a credit card?”
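Both metrics from the quotes above are simple to compute from raw event data. Here is a rough sketch, assuming you log one record per signup and one per editing session. The record shapes, function names, and sample data are hypothetical.

```python
from collections import defaultdict
from datetime import date


def free_to_paid_by_cohort(signups):
    """Free-to-paid conversion rate for each signup-month cohort."""
    totals, paid = defaultdict(int), defaultdict(int)
    for _user_id, cohort, converted in signups:
        totals[cohort] += 1
        paid[cohort] += int(converted)
    return {cohort: paid[cohort] / totals[cohort] for cohort in sorted(totals)}


def active_days_per_month(events):
    """Distinct days with at least one edit, per (user, month)."""
    days = defaultdict(set)
    for user_id, day in events:
        days[(user_id, day.strftime("%Y-%m"))].add(day)
    return {key: len(unique_days) for key, unique_days in days.items()}


# Hypothetical signups: (user_id, signup_month, converted_to_paid)
signups = [
    ("u1", "2025-01", True),
    ("u2", "2025-01", False),
    ("u3", "2025-01", False),
    ("u4", "2025-01", False),
    ("u5", "2025-02", True),
    ("u6", "2025-02", True),
    ("u7", "2025-02", False),
    ("u8", "2025-02", False),
    ("u9", "2025-02", False),
]

# Hypothetical edit events: (user_id, day). Two edits on one day count once.
edit_events = [
    ("u1", date(2025, 1, 3)),
    ("u1", date(2025, 1, 3)),
    ("u1", date(2025, 1, 10)),
    ("u2", date(2025, 1, 5)),
    ("u1", date(2025, 2, 1)),
]

print(free_to_paid_by_cohort(signups))
print(active_days_per_month(edit_events))
```

Grouping conversion by signup cohort rather than reporting one blended number makes it easier to see whether a paywall or onboarding change moved the rate.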